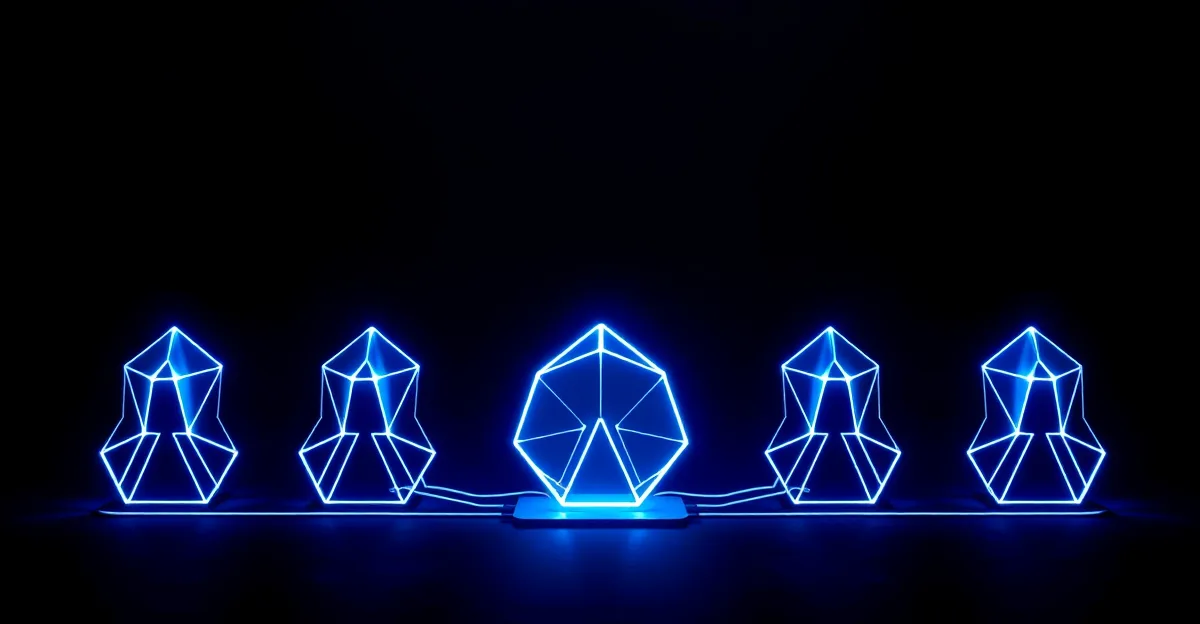

Intent by Augment Code

Spec-driven multi-agent orchestration: describe what you want, then run up to six parallel AI agents to build it

Best for: Senior developers and engineering leads who need to delegate large, well-scoped features to multiple parallel AI agents

Not for: Non-technical founders or exploratory builders. Intent requires upfront spec discipline and punishes vague prompts

Intent by Augment Code in context: product setup, workflows, and operations

Intent is Augment Code’s answer to a different question: not “how do I help one developer write code faster,” but “how do I let one developer run a fleet of AI agents working on different parts of a problem simultaneously.” It launched in public beta on February 26, 2026, and has attracted attention from engineering leads and technical founders who have outgrown single-agent tools like Cursor and Claude Code.

The short version: Intent works well for developers who know exactly what they want and are willing to write it down first. It doesn’t work for the exploratory, iterative, “let’s see what this does” style of vibe coding that non-technical founders typically use. That’s a narrow audience, but within it Intent offers something single-agent tools don’t: spec-driven work split across parallel agents.

How it actually works

The core mechanic is the living specification. Before Intent runs any agents, you write — or have it help you draft — a structured spec: what the feature should do, what success looks like, what existing code it touches. That spec isn’t just documentation; it’s the operating instructions the agents share.
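To make the three ingredients of a spec concrete, here is a minimal sketch of what such a structure might look like. The class name, field names, and the vagueness checks are all hypothetical illustrations; Intent's actual spec format is not described in this review.

```python
from dataclasses import dataclass, field


@dataclass
class FeatureSpec:
    """Hypothetical spec shape; not Intent's real format."""
    goal: str                                                      # what the feature should do
    acceptance_criteria: list[str] = field(default_factory=list)   # what success looks like
    touched_paths: list[str] = field(default_factory=list)         # existing code it touches

    def problems(self) -> list[str]:
        """Return the reasons this spec is too vague to hand to agents."""
        issues = []
        if len(self.goal.split()) < 5:  # arbitrary illustrative threshold
            issues.append("goal is too short to be actionable")
        if not self.acceptance_criteria:
            issues.append("no acceptance criteria")
        if not self.touched_paths:
            issues.append("no existing code listed")
        return issues


spec = FeatureSpec(
    goal="Add an annual billing option to the subscription checkout flow",
    acceptance_criteria=[
        "Annual plan appears at checkout",
        "Webhook records the billing interval",
    ],
    touched_paths=["billing/models.py", "web/checkout/"],
)
vague = FeatureSpec(goal="Improve billing")

print(spec.problems())   # []
print(vague.problems())  # three issues
```

The point of the sketch is only that a spec with all three parts filled in can be checked mechanically before any agent runs, which is the discipline the tool expects from its users.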

Once you confirm the spec, a Coordinator Agent breaks it into discrete tasks with explicit dependencies. Up to six Specialist Agents then execute in parallel, each working in its own isolated Git worktree so they can’t collide. The specialists include agents for investigation, implementation, verification, critique, debugging, and code review. When they finish, a Verifier Agent checks the output against the original spec before anything lands in your review queue.
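The coordination step described above can be sketched as dependency-ordered scheduling with a concurrency cap. This is an illustration under stated assumptions, not Intent's implementation: the task names and the `plan_waves` helper are invented, and grouping work into waves is a simplification (a real scheduler would likely start each task as soon as its own dependencies finish).

```python
from graphlib import TopologicalSorter

MAX_PARALLEL = 6  # the review's stated cap on concurrent specialist agents


def plan_waves(deps: dict[str, set[str]], max_parallel: int = MAX_PARALLEL) -> list[list[str]]:
    """Group tasks into waves; a task is scheduled only after all its dependencies."""
    sorter = TopologicalSorter(deps)  # maps each task to the tasks it depends on
    sorter.prepare()                  # raises graphlib.CycleError on circular dependencies
    waves = []
    while sorter.is_active():
        ready = sorted(sorter.get_ready())
        # Never hand out more tasks at once than there are agents.
        for i in range(0, len(ready), max_parallel):
            waves.append(ready[i:i + max_parallel])
        sorter.done(*ready)
    return waves


# Hypothetical task breakdown for a billing feature.
deps = {
    "schema": set(),
    "api": {"schema"},
    "webhook": {"schema"},
    "ui": {"api"},
    "tests": {"api", "ui", "webhook"},
}
print(plan_waves(deps))
# [['schema'], ['api', 'webhook'], ['ui'], ['tests']]
```

Only `api` and `webhook` can run side by side here, which shows why parallelism pays off mainly when the spec decomposes into many independent tasks.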

The practical result is that a feature that would normally tie up a developer for three days can come back in an hour, implemented, reviewed, and presented as diffs for your inspection. Quality is inconsistent on complex or ambiguous tasks but noticeably good on well-scoped, greenfield features with clear acceptance criteria.

The context engine is the real differentiator

Most coding agents rely on vector search to surface “relevant” code context. Augment’s Context Engine builds a semantic dependency graph of the entire codebase — it understands that a change to your billing model propagates to your subscription UI, your webhook handler, and your admin dashboard, not just to the nearest file. This is particularly valuable on larger codebases where agents in competing tools regularly “forget” about code that’s architecturally important but textually distant from the task at hand.
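The idea of change propagation through a dependency graph can be shown with a toy example. This is not how the Context Engine is built (the review gives no implementation details); the module names and the `impacted_by` function are hypothetical, and the graph here is written by hand rather than derived from code.

```python
from collections import deque


def impacted_by(change: str, depends_on: dict[str, set[str]]) -> set[str]:
    """Return every module that directly or transitively depends on `change`."""
    # Invert the edges so we can walk from a module to the modules that use it.
    dependents: dict[str, set[str]] = {}
    for module, deps in depends_on.items():
        for dep in deps:
            dependents.setdefault(dep, set()).add(module)

    seen: set[str] = set()
    queue = deque([change])
    while queue:  # breadth-first walk over reverse dependencies
        current = queue.popleft()
        for user in dependents.get(current, ()):
            if user not in seen:
                seen.add(user)
                queue.append(user)
    return seen


# Hand-written graph: each module maps to the modules it depends on.
depends_on = {
    "subscription_ui": {"billing_model"},
    "webhook_handler": {"billing_model"},
    "admin_dashboard": {"subscription_ui"},
    "marketing_site": set(),
}
print(sorted(impacted_by("billing_model", depends_on)))
# ['admin_dashboard', 'subscription_ui', 'webhook_handler']
```

A text-similarity search for "billing model" would have little reason to surface `admin_dashboard`; the graph walk reaches it because of structure, not wording.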

In independent testing on a TypeScript monorepo with 180,000 lines across four services, reviewers found the Context Engine performed meaningfully better than Cursor or Claude Code on tasks that required understanding cross-service dependencies. On simple, self-contained tasks, the difference was negligible.

Pricing

Intent runs on Augment’s credit system. There are three plans: Indie at $20/month (40,000 credits), Standard at $60/month (130,000 credits), and Max at $200/month (450,000 credits). There’s no separate Intent pricing; the credits come from the same pool used by Augment’s CLI and IDE extensions.

What that means in practice: a small task with ~10 tool calls costs around 300 credits. A complex multi-agent task with ~60 tool calls costs around 4,300 credits. On the Standard plan, you can run roughly 30 complex tasks per month before hitting the ceiling, which is usually enough for a team shipping one or two features per week through Intent.
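The arithmetic behind these estimates is easy to reproduce for every tier. The sketch below uses only the plan prices, credit allowances, and per-task credit costs quoted in this review; the per-task figures are approximations, so treat the results as rough budgets rather than guarantees.

```python
# (monthly price in USD, monthly credits), as quoted in this review
PLANS = {
    "Indie": (20, 40_000),
    "Standard": (60, 130_000),
    "Max": (200, 450_000),
}
SMALL_TASK_CREDITS = 300      # ~10 tool calls
COMPLEX_TASK_CREDITS = 4_300  # ~60 tool calls


def tasks_per_month(credits: int, credits_per_task: int) -> int:
    """Whole tasks that fit in a monthly allowance (credits don't roll over)."""
    return credits // credits_per_task


def dollars_per_task(price: float, credits: int, credits_per_task: int) -> float:
    """Effective dollar cost of one task if the allowance is fully used."""
    return price * credits_per_task / credits


for name, (price, credits) in PLANS.items():
    complex_n = tasks_per_month(credits, COMPLEX_TASK_CREDITS)
    small_n = tasks_per_month(credits, SMALL_TASK_CREDITS)
    cost = dollars_per_task(price, credits, COMPLEX_TASK_CREDITS)
    print(f"{name}: {complex_n} complex or {small_n} small tasks, ~${cost:.2f} per complex task")
```

Run as written, this gives 9 complex tasks on Indie, 30 on Standard, and 104 on Max, with each complex task costing roughly two dollars on any tier.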

Credits don’t roll over, and the Indie plan’s 40,000 credits cover only about nine complex tasks at roughly 4,300 credits each, so they can evaporate quickly if you’re running Intent on substantial work. Evaluate on the Standard plan before committing.

What it’s like day-to-day

Intent is macOS-only in public beta (Windows support is on a waitlist). The app feels like a task manager for AI agents rather than an IDE: you write or review specs, submit them to Intent, and then watch tasks complete in a sidebar while you work on something else. It supports BYOA (Bring Your Own Agent): if you have a Claude Code or Codex subscription, you can use those agents directly inside Intent without additional model licensing.

Intent requires discipline that most vibe coding tools deliberately remove. If your task spec is vague, Intent will produce vague output — and unlike Cursor or Lovable, it won’t ask follow-up questions to clarify. That’s a deliberate design choice: Intent assumes you know what you want and rewards that knowledge with speed and parallelism. For developers who work that way, it’s genuinely impressive. For everyone else, it’s frustrating.

Limitations

Public beta means rough edges. Reviewers have noted unreliable task status updates, occasional agent stalls on complex multi-service tasks, and a spec editor that still feels like a first version. The parallel architecture also makes debugging tricky: when six agents are working at once, it isn’t obvious which one introduced a regression.

The macOS-only constraint is a hard blocker for Windows-first teams.

Who this is for

Intent is for developers who already use Claude Code or Cursor and feel like the bottleneck is supervision time, not capability. If you’re spending your day reviewing AI output, approving changes, and re-queuing the next task manually, Intent is solving your actual problem. If you’re a non-technical founder building your first MVP, nothing about Intent’s workflow is designed for you — start with Lovable or Bolt and come back here when you have a technical co-founder on the team.
